Tools for testing Functional Web Apps

by Taylor Beseda

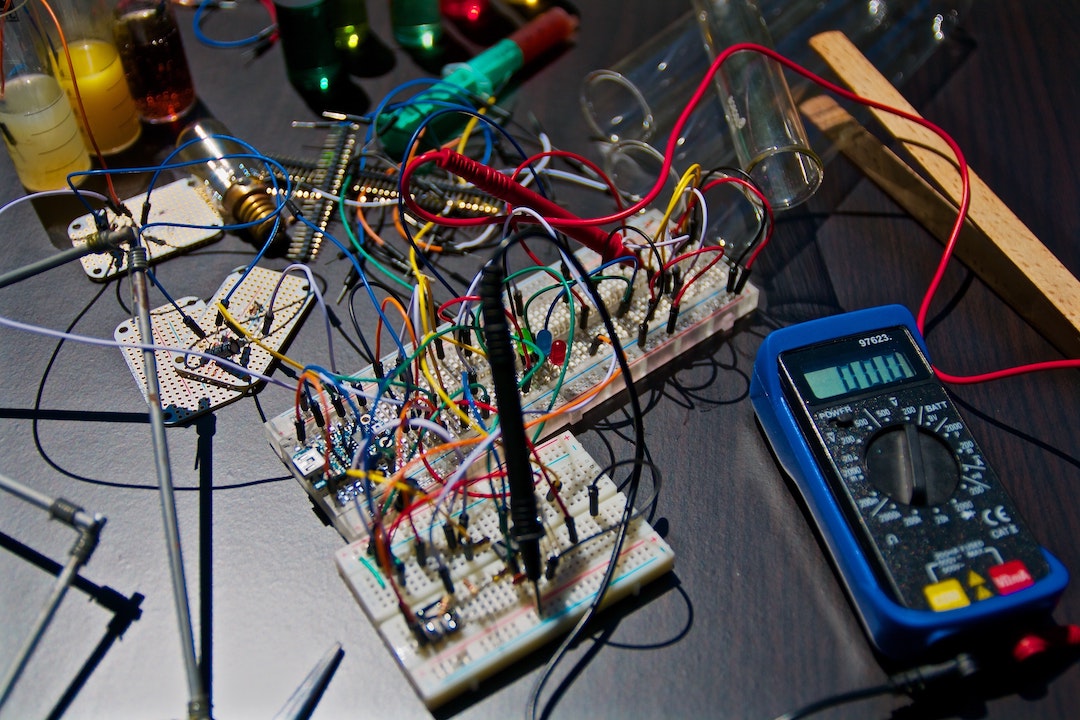

Photo by Nicolas Thomas

If you’re building critical cloud functions to return API results, handle evented business operations (like Shopify webhooks), or render web views, you’ll want to incorporate some tests. It’s essential to test their internals, inputs, and outputs in a predictable context. We want a utilitarian toolchain to ensure core services function as expected, one where each test can run in isolation in an unmodified Node.js context. The test suite should run quickly and deterministically, which is helpful in local development and ideal in CI, where computing resources might be limited.

Our tests should be proportionate to our functions in scope and size. Ideally, tests are fast and small, just like the services they’re testing. (We’re not building fat functions, right?)

For the sake of brevity, this discussion is limited to a Node.js runtime, but the principles are the same for other environments. Additionally, we won’t worry about testing user interfaces or varying browser environments; those utilities are another post entirely.

So what’s a good approach? Which libraries should be candidates?

A comparison

Several frameworks with performant runners help execute atomic tests, even concurrently. Some important considerations are library capabilities (like assertions), package size, maturity, and level of maintenance. Let’s look at a collection of the most popular, up-to-date modules on npm today:

| Library | Unpacked size | Concurrent | Version | Updated |
|---|---|---|---|---|
| Ava | 281 kB | Yes | 3.15.0 | 2021-11-01 |
| Jasmine | 47 kB | No | 3.10.0 | 2021-10-13 |
| @hapi/lab | 160 kB | Yes | 24.4.0 | 2021-11-09 |
| Mocha | **3.8 MB** | Yes | 9.1.3 | 2021-10-15 |
| Node Tap | **28.3 MB** | Yes | 15.1.5 | 2021-11-26 |
| tape | 248 kB | No¹ | 5.3.2 | 2021-11-16 |
| uvu | 46 kB | No | 0.5.2 | 2021-10-08 |

¹ Achievable with tape-esque libraries like mixed-tape.

A note about Jest

“But where’s Jest?” you ask. Don’t get me wrong, I understand the appeal of a framework with so many pleasantries. Jest’s feature set is impressive and battle-tested. Unfortunately, tools like Jest are opinionated in order to accomplish so much. Jest uses implicit globals and its own context, so it may not execute code the same way our servers will. This pattern can require all sorts of configuration bloat and transpilation, making debugging (especially in CI) tedious. In my view, Jest is not appropriate for what we’re testing.

Unpacked module size

Emphasis on sizes > 1 MB in the above table is intentional.

Since we’re running our tests in a cloud environment (in addition to locally), disk space matters.

Unfortunately, the library that most appeals to me, Node Tap, is just too large. At 28 MB, tap isn’t very portable and will occupy a large part of allotted space in an environment like AWS Lambda. Hopefully, this limitation won’t always be an issue, but it’s an important factor for now.

A recommended testing “stack”

I think any of the above options is viable, depending on your use case and preference. For example, if BDD is preferable, jasmine has you covered. ava has excellent TypeScript support. uvu is super fast and works with ESM. And if you’re looking for staying power, mocha has been around for nearly a decade!

For us at Begin and Architect, tape has been in use for several years. tape has a stable and straightforward API, routine maintenance updates, and outputs TAP, making it really versatile. While TAP is legible, it’s not the most human-readable format. Fortunately, several TAP reporters can help display results for developers. Until recently, Begin’s TAP reporter of choice was tap-spec. Sadly, tap-spec wasn’t kept up to date, and npm began reporting vulnerabilities.
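For readers who haven't seen raw TAP, the sketch below builds a small TAP document by hand. It is a simplified illustration, not tape's implementation: the `toTap` helper and the sample results are hypothetical, and real tape output also includes comment lines and failure diagnostics.

```javascript
// Hypothetical helper: turn a list of { name, pass } results into TAP text.
function toTap (results) {
  const lines = ['TAP version 13']
  results.forEach((result, i) => {
    // Each test point is "ok" or "not ok", a 1-based number, and a description.
    const status = result.pass ? 'ok' : 'not ok'
    lines.push(`${status} ${i + 1} ${result.name}`)
  })
  // The plan line states how many test points were expected.
  lines.push(`1..${results.length}`)
  return lines.join('\n')
}

console.log(toTap([
  { name: 'returns 200', pass: true },
  { name: 'body is JSON', pass: false }
]))
// Prints:
// TAP version 13
// ok 1 returns 200
// not ok 2 body is JSON
// 1..2
```

The format is line-oriented and easy to parse, which is why it can be piped from any TAP-producing runner into a separate reporter.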

A new TAP reporter

Enter tap-arc. Heavily inspired by tap-spec (a passing suite’s output is nearly identical), tap-arc is a minimal, streaming TAP reporter with useful expected vs. actual diffing. We’re still improving the package, but it’s definitely on par with tap-spec.
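As a rough idea of what any TAP reporter has to do at its core, here is a deliberately tiny sketch that counts passing and failing test points. This is not tap-arc's code or API: the `summarize` function is hypothetical, it reads a whole string rather than a stream, and it ignores diffing, TODO/SKIP directives, and diagnostics.

```javascript
// Hypothetical, simplified reporter core: tally TAP test points from a string.
function summarize (tapText) {
  const summary = { pass: 0, fail: 0, failures: [] }
  for (const line of tapText.split('\n')) {
    // Match "ok" / "not ok", an optional test number, and a description.
    const match = /^(not ok|ok)\b\s*(\d+)?\s*(.*)$/.exec(line.trim())
    if (!match) continue // skip the version line, plan, comments, diagnostics
    if (match[1] === 'ok') {
      summary.pass += 1
    } else {
      summary.fail += 1
      summary.failures.push(match[3])
    }
  }
  return summary
}

const report = summarize([
  'TAP version 13',
  '# handler',
  'ok 1 returns 200',
  'not ok 2 body is JSON',
  '1..2'
].join('\n'))

console.log(`${report.pass} passed, ${report.fail} failed`)
```

A real reporter does this incrementally as lines arrive and spends most of its effort on presentation, such as showing expected vs. actual values for each failure.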

Feedback?

I’m super interested in what others are doing in this realm. How are you testing cloud functions? What factors are important when selecting test utilities? Do you test in the same environment you’re deploying to? Let us know on Twitter: @begin.